Imagine a camera that can see around corners.

Researchers spent years figuring out how to use lasers to effectively turn solid walls into mirrors. Now engineers from Rice University, Princeton University and Southern Methodist University have harnessed a type of artificial intelligence known as deep learning to create a new laser-based system that captures detailed images of objects around corners in real time.

With further development, this type of technology might allow self-driving cars to "look" around parked cars or busy intersections to see hazards or pedestrians. It could also be installed on satellites and spacecraft for tasks such as capturing images inside a cave on an asteroid.

"Compared to other approaches, our non-line-of-sight imaging system provides uniquely high resolutions and imaging speeds," said Stanford University's Chris Metzler '13, lead author of a new study about work he conducted during his Ph.D. studies at Rice's Brown School of Engineering. "These attributes enable applications that wouldn't otherwise be possible, such as reading the license plate of a hidden car as it's driving or reading a badge worn by someone walking on the other side of a corner."

The paper is available online in the Optical Society journal Optica. In it, Metzler, Rice co-authors Ashok Veeraraghavan and Richard Baraniuk, and their colleagues report that the imaging system can distinguish submillimeter details of a hidden object from 1 meter away.

Seeing around corners

Metzler, who holds three degrees in electrical and computer engineering from Rice, said the system is designed to image small objects at very high resolutions but can be combined with other imaging systems that produce low-resolution, room-sized reconstructions.

Veeraraghavan was part of the team that first demonstrated non-line-of-sight imaging around corners in 2012. That demonstration used a technique called "time-of-flight" imaging to essentially convert normal, opaque walls into mirrors.

Here's how it works. Light from a high-speed laser bounces off the wall and into the hidden area. Some of that light strikes objects in the hidden area and is reflected back to the wall, which in turn reflects part of it to the camera. By measuring precisely how long the reflected light takes to return to the camera (its time of flight), the imager can construct a coarse image of the hidden area.

"The main advance in time-of-flight imaging since 2012 is that you can now build systems that cost several thousand dollars rather than the original equipment, which cost more than $1 million," Veeraraghavan said. "The technology is very good at imaging a wide area, like a room-scale scene, at very coarse resolution of about a centimeter or so. Any features or objects smaller than that become completely blurred."
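The timing idea behind time-of-flight imaging can be made concrete with a little geometry. The sketch below is a simplified, hypothetical model, not the researchers' code: it treats the laser, the two wall spots, the camera and a single hidden point as positions in meters, computes how long light takes along the laser-to-wall-to-object-to-wall-to-camera path, and inverts a measured time to recover the length of the two legs hidden behind the corner. The coordinates in the demo and the helper names are made up for illustration; the last helper shows how fine the timing must be to separate paths that differ by about a centimeter.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s


def three_bounce_time(laser, wall_in, hidden, wall_out, camera):
    """Travel time (s) along laser -> wall -> hidden point -> wall -> camera.

    Points are (x, y, z) tuples in meters. Idealized model: bounces are
    instantaneous and detector latency is ignored.
    """
    path = (math.dist(laser, wall_in) + math.dist(wall_in, hidden)
            + math.dist(hidden, wall_out) + math.dist(wall_out, camera))
    return path / C


def hidden_path_length(t, laser, wall_in, wall_out, camera):
    """Length (m) of the two hidden legs, wall -> object -> wall.

    The visible legs are known from the setup, so subtracting them from the
    total distance c * t leaves only the part of the path behind the corner.
    """
    visible = math.dist(laser, wall_in) + math.dist(wall_out, camera)
    return C * t - visible


def timing_precision_for(path_resolution_m):
    """Timing precision (s) needed to tell apart paths differing by this length."""
    return path_resolution_m / C


if __name__ == "__main__":
    laser = camera = (0.0, 0.0, 0.0)  # hypothetical: co-located, 1 m from the wall
    spot = (0.0, 1.0, 0.0)            # same wall spot for illumination and viewing
    hidden = (1.0, 0.5, 0.0)          # a point around the corner
    t = three_bounce_time(laser, spot, hidden, spot, camera)
    print(f"arrival time: {t * 1e9:.2f} ns")
    print(f"hidden legs: {hidden_path_length(t, laser, spot, spot, camera):.3f} m")
    print(f"timing for 1 cm of path: {timing_precision_for(0.01) * 1e12:.1f} ps")
```

Because each hidden point produces a different total path length, recording when light arrives is what lets the imager place objects in the hidden scene; the tens-of-picoseconds timing needed per centimeter of path is one reason this approach resolves features only at roughly the centimeter scale.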

Solving an optics problem with deep learning

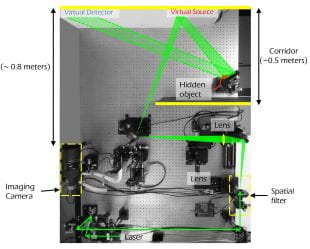

The "deep-inverse correlography" imaging system described this week by Metzler, Veeraraghavan, Baraniuk and colleagues uses a commercially available camera sensor and a powerful, but otherwise standard, laser source similar to the one found in a laser pointer. As in the time-of-flight approach, the laser beam is bounced off a visible wall onto the hidden object and then back to the wall. But rather than measuring how long the light takes to return, the new system captures an interference pattern on the wall known as a speckle pattern.

Veeraraghavan said the waves of light striking the wall from different directions interact with one another, much like ripples on the surface of a pond. In some locations the waves cancel one another out, and in others they reinforce one another and become amplified.

"This constructive and destructive interference produces a highly random intensity pattern," he said. "This pattern contains a lot of information about the structure that created it."
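A toy model helps show why such a pattern looks random. The sketch below is a simplified, hypothetical one-dimensional model, not the method from the paper: it adds up many light waves of equal strength that reach the wall from random directions with random phases (standing in for light scattered by a rough hidden surface), then squares the total field to get the brightness at each point on the wall. Where the phases line up the waves reinforce; where they oppose, they cancel. For this kind of fully developed speckle, standard optics theory predicts a contrast (standard deviation divided by mean intensity) close to 1, which the sketch lets you check. All parameter values and function names are assumptions chosen for illustration.

```python
import cmath
import math
import random
import statistics


def speckle_intensity(xs, n_waves=60, wavelength=1.0, max_angle=0.5, seed=0):
    """Intensity at wall positions xs from n_waves interfering plane waves.

    Hypothetical model: each wave arrives at a random angle (radians, within
    +/- max_angle) with a random phase. Lengths are in units of the wavelength.
    """
    rng = random.Random(seed)
    k0 = 2 * math.pi / wavelength
    waves = [
        (k0 * math.sin(rng.uniform(-max_angle, max_angle)),  # spatial frequency along the wall
         rng.uniform(0.0, 2 * math.pi))                       # random phase from the rough surface
        for _ in range(n_waves)
    ]
    intensities = []
    for x in xs:
        # Superpose the complex fields, then take |field|^2 to get intensity.
        field = sum(cmath.exp(1j * (k * x + phase)) for k, phase in waves)
        intensities.append(abs(field) ** 2)
    return intensities


def speckle_contrast(intensities):
    """Standard deviation over mean; close to 1 for fully developed speckle."""
    return statistics.pstdev(intensities) / statistics.fmean(intensities)


if __name__ == "__main__":
    xs = [0.37 * i for i in range(5000)]
    pattern = speckle_intensity(xs)
    mean = statistics.fmean(pattern)
    print(f"contrast: {speckle_contrast(pattern):.2f}")
    print(f"darkest / mean: {min(pattern) / mean:.3f}")
    print(f"brightest / mean: {max(pattern) / mean:.1f}")
```

Changing the hidden scatterers (here, the random seed) changes the whole pattern, which is the sense in which the speckle encodes the structure that produced it; recovering that structure from the pattern is the hard inverse problem the researchers tackled.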

Reconstructing the hidden object from the speckle pattern requires solving a challenging computational problem. Real-time imaging demands short exposure times, but short exposures produce measurements too noisy for existing algorithms to handle. To solve this problem, the researchers turned to deep learning.

"Compared to other approaches for non-line-of-sight imaging, our deep learning algorithm is far more robust to noise and thus can operate with much shorter exposure times," said study co-author Prasanna Rangarajan of SMU. "By accurately characterizing the noise, we were able to synthesize data to train the algorithm to solve the reconstruction problem using deep learning without having to capture costly experimental training data."
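The idea of training on synthesized rather than captured data can be sketched as follows. This is a hypothetical illustration, not the authors' noise model: it assumes the two noise sources commonly used to describe camera sensors, photon shot noise (Poisson-distributed counts) and Gaussian read noise, and turns a clean intensity pattern into a simulated measurement. The parameter `photons_per_unit` is an invented stand-in for exposure time. A shorter exposure collects fewer photons, so the relative noise grows. Pairs of (noisy measurement, clean pattern) produced this way could serve as training examples; the network itself is not shown.

```python
import math
import random


def poisson(rng, lam):
    """Sample a Poisson count with mean lam (Knuth's method).

    Adequate for the modest photon counts of short exposures; exp(-lam)
    underflows for lam above roughly 700.
    """
    threshold = math.exp(-lam)
    k, p = 0, 1.0
    while True:
        p *= rng.random()
        if p <= threshold:
            return k
        k += 1


def synthesize_measurement(clean, photons_per_unit, read_noise_std, rng):
    """Simulate a noisy sensor reading: shot noise plus Gaussian read noise."""
    return [poisson(rng, photons_per_unit * v) + rng.gauss(0.0, read_noise_std)
            for v in clean]


def make_training_pair(clean, photons_per_unit, read_noise_std=1.0, seed=0):
    """Return a (noisy input, clean target) pair for supervised training."""
    rng = random.Random(seed)
    noisy = synthesize_measurement(clean, photons_per_unit, read_noise_std, rng)
    return noisy, clean


def relative_rms_error(noisy, clean, photons_per_unit):
    """RMS error of the rescaled measurement, relative to the mean clean intensity."""
    mse = sum((n / photons_per_unit - c) ** 2 for n, c in zip(noisy, clean)) / len(clean)
    return math.sqrt(mse) / (sum(clean) / len(clean))


if __name__ == "__main__":
    clean = [1.0] * 2000  # hypothetical flat pattern, to isolate the noise
    for photons in (2, 50):  # short vs. longer exposure
        noisy, target = make_training_pair(clean, photons)
        print(photons, round(relative_rms_error(noisy, target, photons), 3))
```

Generating pairs like these is cheap, which is the appeal of the approach described above: if the simulated noise matches the real sensor closely, a network can be trained without capturing large experimental datasets.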

Imaging in detail

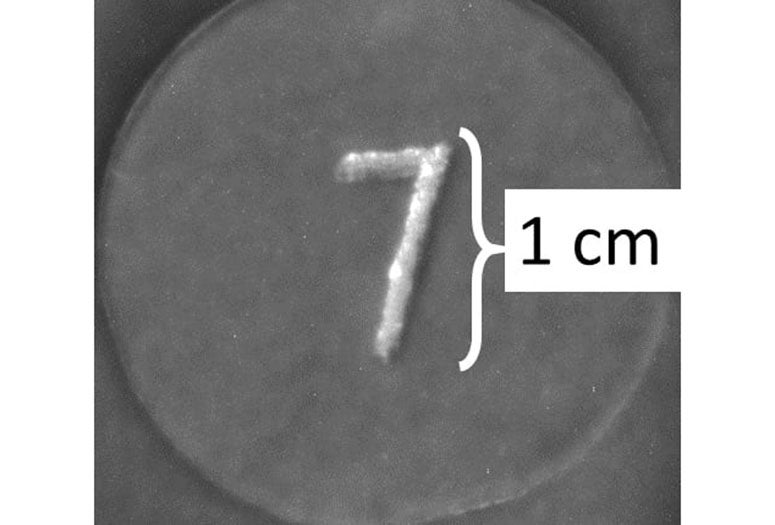

The researchers tested the new technique by reconstructing images of 1-centimeter-tall letters and numbers hidden behind a corner, using an imaging setup about 1 meter from the wall. With an exposure time of a quarter of a second, the approach produced reconstructions with a resolution of 300 microns.

"So we're talking about a much smaller field of view compared to time-of-flight approaches -- a field of view that's on the order of a few inches as opposed to a few meters," Veeraraghavan said. "But within this narrow field of regard, we are able to achieve a spatial resolution that's about 100 times more detailed than time-of-flight."

Moving forward, Veeraraghavan, Baraniuk and their collaborators hope to marry the two approaches in a single system. To illustrate how the system might work, Veeraraghavan used the example of photographing the faces or ID badges of specific people in a crowded room.

"With time-of-flight you can get a coarse image that shows where people are," he said. "Once you have localized their ID badges or their faces, then you could use correlography, the technique that is the focus of this paper, to then get a very high-resolution image of just that small localized volume."

The research is part of the Defense Advanced Research Projects Agency's Revolutionary Enhancement of Visibility by Exploiting Active Light-fields program, or REVEAL, which is developing a variety of techniques to image hidden objects around corners.